TL;DR: This site does not and will not use any form of "AI"-generated content, partly because of its low quality and questionable legality, but primarily out of (massive) ethical concerns.

---

This page documents this site's position on any form of "AI", such as text generation, image generation, and code generation, among others. It begins with a clarification of the phrase "AI", then lays out the ethical and legal problems with the technology, starting from a generalised assumption and narrowing down to the current (as of writing) level of "AI" technology.

Note that this page is not legal advice, nor has a lawyer confirmed it to be legally accurate; it is just my interpretation of the law, which may or may not be accurate.

The current state of "AI" technology could not possibly be considered "intelligent". In its current state, the technology is best described as a "bullshit machine", spewing out text as if it "knows" what it's saying. See the following paper for more information:

For the remainder of this page, I will use "artificial intelligence" to refer to a computer that can perfectly or near-perfectly simulate a brain (as in, actual artificial intelligence) and "bullshit machine" to refer to our current level of content generation technology.

If a human were to look at a copyrighted work, that would be fine. A human could look at thousands of copyrighted works, form their own views of society, and start writing their own stuff. It would be completely infeasible to expect a human to cite every single copyrighted work they have ever read whenever they write something.

The important difference between a human and a bullshit machine is that a human is actually thinking about what they've learned and providing original insight and knowledge. A bullshit machine is just regurgitating the average of what it has scraped.

If a human were to memorise or otherwise copy an entire copyrighted work and claim it as their own, however, that would (reasonably) be considered plagiarism and a violation of copyright. This should also be the case with artificial intelligence.

A hypothetical computer that can simulate a human brain and provide its own unique insights, separate from the copyrighted works it has "learned", should, all other things being equal, be (legally) interchangeable with a human.

The one highly important difference between this hypothetical "artificial intelligence" and a human is that, as of now (at least in the USA), copyright can only be assigned to humans. This means that all works generated entirely by a computer are (as of writing) in the public domain. Since the "artificial intelligence" is still providing actual insight, I personally believe that no copyright violation has occurred.

Bullshit machines, because of the way they are built, do not have the ability to provide their own unique insight. All that bullshit machines do is randomly generate plausible-sounding text, likely the average of whatever they have been trained on.

Since bullshit machines cannot provide their own insight, they are fundamentally reduced to regurgitating the copyrighted works they have "learned".

Since a bullshit machine is (obviously) not a human, the works it produces are public domain. In general, you are not allowed to redistribute a copyrighted work under a less restrictive license (only a more restrictive one). Since the bullshit machine both 1) does not provide any insight that could be copyrightable, and 2) outputs work that is required to be in the public domain anyway, training it on copyrighted works effectively re-releases those works into the public domain. It would/should therefore be copyright infringement for a bullshit machine to be "trained" on works that are not already in the public domain.

Since it is reasonable to deem using copyrighted work to train a bullshit machine copyright infringement, any nonconsensual use of copyrighted work to do so should constitute infringement. However, there have been many cases where companies have done this exact thing by scraping content without consent, leading to whole new classes of bot-prevention programs, such as go-away and iocaine. Therefore, supporting bullshit machines, as well as the companies that promote or distribute them, would mean endorsing this highly unethical (and probably illegal) practice.

"Training" these bullshit machines takes an incredibly large amount of resources. Parsing billions of works consumes a considerable amount of energy, destroying the environment. Even running the machines takes a considerable amount of energy. I could never be comfortable supporting technology that contributes this much to climate change.

I cannot possibly use this destructive, highly unethical, climate-incinerating technology, ever, without feeling bad about myself. I am highly committed, and if, for whatever reason, you don't believe me, I have one more thing:

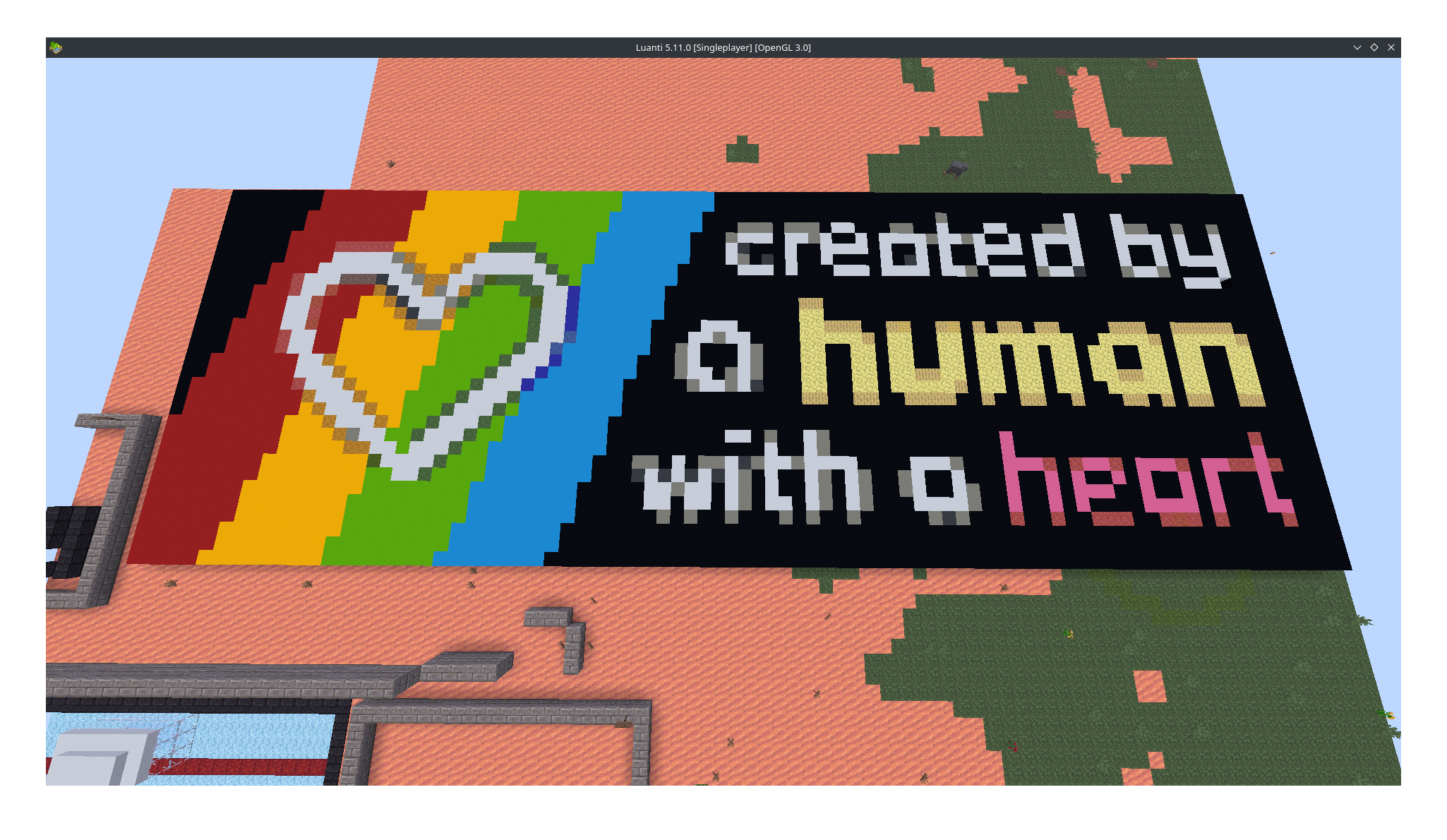

This is a picture of a flat world I have in Mineclonia on Luanti. This is a block-for-pixel accurate version of one of Cadence's "Created by a human" badges that I built, by hand, in this world.

I am that committed.

"Created by a human" badges for reference

Please stop externalizing your costs directly into my face (Drew DeVault)

Baccyflap's No AI [sic] Webring, which I am a proud member of

The "Awful AI" [sic] GitHub repository